Back in February, I started testing the NeoStack AI plugin by BETIDE STUDIO for AI-assisted Unreal Engine game development. The plugin is still in beta, and I am currently testing the latest beta version (v1.0.66).

In addition to using OpenRouter to run free LLMs, NeoStack AI recently added the ability to run free local LLMs via Ollama, which I run on my GeForce RTX 3080.

I am really excited about the new Ollama local LLM capability because it lets me take advantage of the free, offline processing power of my NVIDIA GeForce RTX 3080 graphics card.

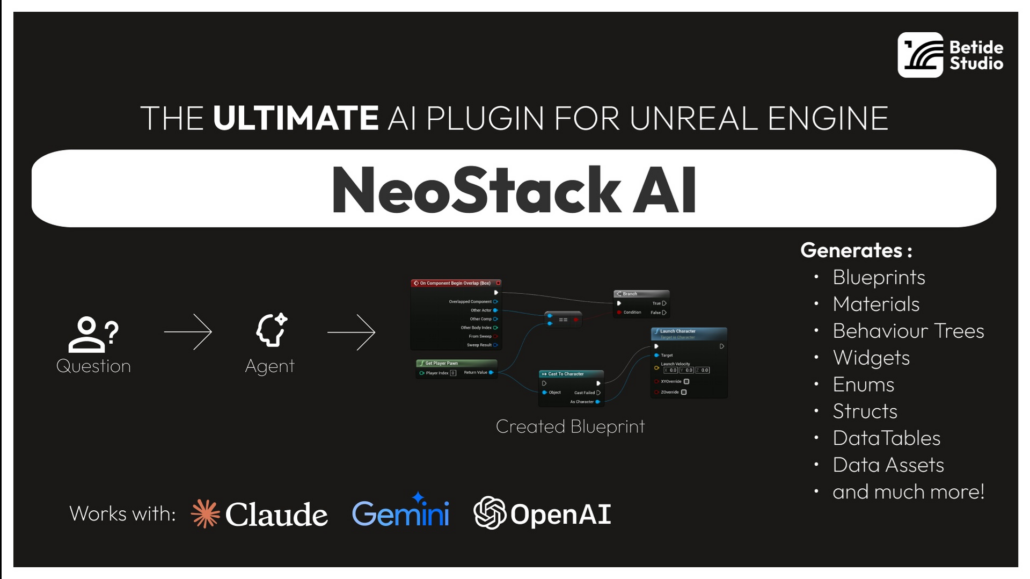

As a reminder, the key features of NeoStack AI are:

- Multi-Agent Support – Connect to Claude Code, Gemini CLI, or OpenRouter

- Native Editor UI – Slate-based chat window with streaming responses

- Asset Generation – Create Blueprints, Materials, Behavior Trees, Data Tables and more

- Context Attachments – Attach Blueprint nodes or assets to your prompts

- Project Indexing – Automatic project indexing for context-aware suggestions

I’ll continue to keep you updated on both my cloud and local LLM testing with NeoStack AI for Unreal Engine.