Using the GLM 4.7 Flash Local LLM Model by Z.ai to Develop a Moon Landing Simulation Using C# on my Alienware Aurora R11 RTX-3080 10GB Video Card

The GLM-4.7 Flash model is an open-weight, 30B-parameter Mixture of Experts (MoE) variant released by Beijing Zhipu Huazhang Technology Co., Ltd. on January 19, 2026. It is positioned as a lightweight, efficient option for local deployment and for agentic tasks such as coding. The Lunar Lander simulation coding test ran without any problems at 6.12 tokens per […]

Using GLM 4.6V Flash Local LLM Model by Z.ai to Develop a Moon Landing Simulation Using C# on my Alienware Aurora R11 RTX-3080 10GB Video Card

Over the past 6 months, I have continued to test locally hosted open-source multimodal agentic models that could run comfortably on my Nvidia RTX 3080 with 10GB of VRAM. Back in August, I added GLM 4.5 to my testbench because it was matching or surpassing DeepSeek V3, Qwen 2.5 Coder, and Llama 3.1 in benchmarks. At […]

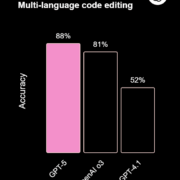

OpenAI Releases GPT-5 and it is State of the Art (SOTA) across Key Coding Benchmarks

OpenAI released GPT-5 today, and it is now state of the art (SOTA) across key coding benchmarks, scoring 74.9% on SWE-bench Verified and 88% on Aider polyglot. On SWE-bench Verified, which tests AI models on real-world GitHub issues by evaluating their ability to generate accurate code patches, GPT-5 with Thinking (High) scored highest with 74.9%, followed closely by Claude Opus 4.1 […]